
## CloudBerry Backup to S3

Even with its data protection functionality (erasure coding and bitrot detection against hardware failures), highly available nodes (in distributed configurations) and some other nice features, Minio is still super quick and easy to set up and start using.
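To make the two data-protection terms above more concrete, here is a minimal Python sketch. It is not Minio's implementation: Minio uses Reed-Solomon based erasure coding and its own bitrot hashing, whereas this example swaps in the simplest possible erasure code (a single XOR parity shard, which survives the loss of any one shard) and SHA-256 checksums for bitrot detection. All function names are hypothetical.

```python
from __future__ import annotations

import hashlib
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k zero-padded data shards and append one XOR parity shard."""
    shard_len = -(-len(data) // k)  # ceiling division
    padded = data.ljust(shard_len * k, b"\0")
    shards = [padded[i * shard_len:(i + 1) * shard_len] for i in range(k)]
    return shards + [reduce(xor_bytes, shards)]


def checksum(shard: bytes) -> str:
    """Hash stored next to each shard; a mismatch on read indicates bitrot."""
    return hashlib.sha256(shard).hexdigest()


def drop_rotten(shards: list[bytes], sums: list[str]) -> list[bytes | None]:
    """Replace every shard whose hash no longer matches with None."""
    return [s if checksum(s) == c else None for s, c in zip(shards, sums)]


def recover(shards: list[bytes | None], k: int, original_len: int) -> bytes:
    """Rebuild at most one missing shard from the survivors, then reassemble."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("single XOR parity can recover only one lost shard")
    fixed = list(shards)
    if missing:
        # XOR of all surviving shards (data + parity) equals the missing one.
        fixed[missing[0]] = reduce(xor_bytes, [s for s in shards if s is not None])
    return b"".join(fixed[:k])[:original_len]


if __name__ == "__main__":
    data = b"backup payload from a legacy server"
    shards = encode(data, k=4)
    sums = [checksum(s) for s in shards]

    # Simulate bitrot: flip every bit of the first byte in shard 2.
    rotten = list(shards)
    rotten[2] = bytes([rotten[2][0] ^ 0xFF]) + rotten[2][1:]

    restored = recover(drop_rotten(rotten, sums), k=4, original_len=len(data))
    print(restored == data)
```

A real deployment uses several parity shards so it can lose more than one disk; the single-parity version just keeps the arithmetic visible.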

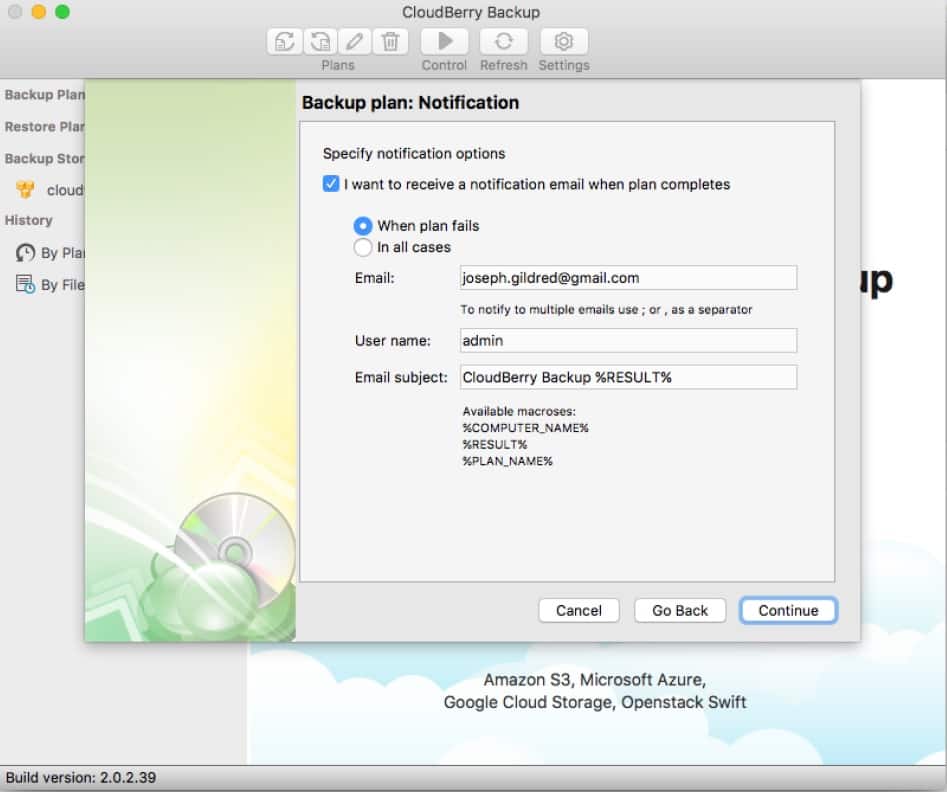

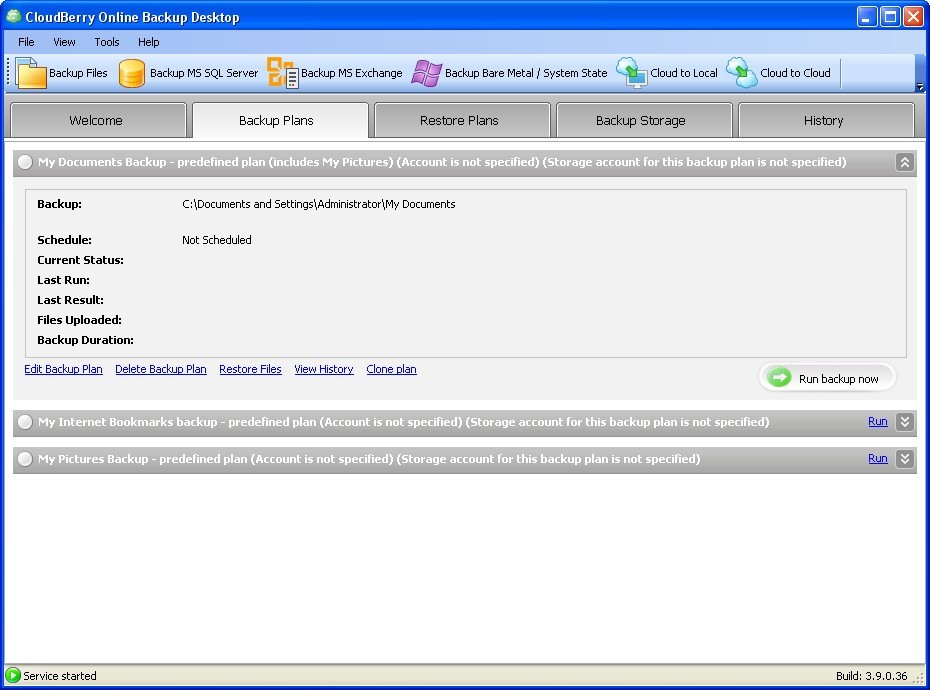

Since 2011, CloudBerry Lab has offered backup and file management tools for IT pros and computer users. With two major product lines (Standalone and Managed Backup Service, "MBS" for short), the company offers tools for cross-platform (Windows, Linux and Mac) data protection (automated backup) and easy-to-use file management (CloudBerry Explorer), with a major focus on modern cloud storage providers and technologies. MBS is a SaaS offering designed to simplify the daily routine of IT departments and service providers (partners offering services to other companies) by taking backup offerings to the next level. With ease of use, an affordable price and flexible agent options, MBS comes with a web UI and is a fully available 24x7x365 system managed by the CloudBerry DevOps team. There are some other products as well, such as Remote Assistance, CloudBerry Drive, native NAS applications (QNAP and Synology), a Data De-duplication server and G Suite / Office 365 backup tools (based on native API methods from Google and Microsoft, respectively).

Minio is an object storage service that is fully compatible with the AWS S3 API. Supporting AWS Signature v2 / v4, it comes with a variety of options for developers and architects, including an SDK, a CLI and a web UI to access users' stored content. It fits very well for development and testing purposes, is a great way to deliver static assets (like images, videos and documents) and is a great fit for defining backup targets (repositories).
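Because Minio speaks the S3 API, any client that signs requests with AWS Signature v4 can talk to it. Below is a minimal Python sketch of the v4 signing-key derivation and final HMAC, following the algorithm AWS publishes. It assumes the canonical request has already been built (building it fully is out of scope), it does not cover Signature v2, and the host name, bucket, secret and region in the usage part are hypothetical placeholders.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def signing_key(secret_key: str, date: str, region: str, service: str) -> bytes:
    """Derive the SigV4 signing key; `date` is YYYYMMDD."""
    k_date = _hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def string_to_sign(amz_date: str, region: str, service: str, canonical_request: str) -> str:
    """Build the string to sign; `amz_date` looks like 20240101T000000Z."""
    scope = f"{amz_date[:8]}/{region}/{service}/aws4_request"
    request_hash = hashlib.sha256(canonical_request.encode("utf-8")).hexdigest()
    return "\n".join(["AWS4-HMAC-SHA256", amz_date, scope, request_hash])


def sign(secret_key: str, amz_date: str, region: str, service: str, canonical_request: str) -> str:
    """Return the hex signature that goes into the Authorization header."""
    key = signing_key(secret_key, amz_date[:8], region, service)
    sts = string_to_sign(amz_date, region, service, canonical_request)
    return hmac.new(key, sts.encode("utf-8"), hashlib.sha256).hexdigest()


if __name__ == "__main__":
    # Hypothetical request: list a bucket called "backups" on a local Minio node.
    empty_payload = hashlib.sha256(b"").hexdigest()
    amz_date = "20240101T000000Z"
    canonical_request = "\n".join([
        "GET",
        "/backups/",
        "",  # no query string
        "host:minio.example.local:9000",
        f"x-amz-content-sha256:{empty_payload}",
        f"x-amz-date:{amz_date}",
        "",  # the canonical headers are followed by an empty line
        "host;x-amz-content-sha256;x-amz-date",
        empty_payload,
    ])
    print(sign("EXAMPLE-SECRET-KEY", amz_date, "us-east-1", "s3", canonical_request))
```

In practice an SDK or CLI does all of this for you; the point is that the secret key never travels over the wire, only an HMAC scoped to a date, region and service.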


Here at CloudBerry, one of my goals is to help partners decide on, pick and build the right configuration for handling computer data when they need a backup solution. FTP, SCP, WebDAV and some other proprietary protocols have been around forever, and their simplicity and flexibility made them the number one choice of target configuration in the data protection and management fields. Time flies, and today we can afford bi-directional circuits of tens or even hundreds of Mbps, where, apart from disk IOPS, the target configuration may become the primary bottleneck. But there is a big BUT! I would consider the above protocols legacy, since they have a number of limitations and are slow due to the architecture of their data transfers. And this is where something else comes up: Minio is gaining more and more ground as part of a seamless storage system for self-hosted configurations. A quick example of when you would follow this guide: you offer backup and DR services, and you have a bunch of unused disks (JBOD), a few NASes and a huge amount of free space on one of your legendary legacy servers, which is still alive and which you can't just throw away since it is still powerful and can do the job.
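A rough back-of-the-envelope illustration of why a faster link shifts the bottleneck to the target side. This Python sketch models only one target-side limit (disk throughput as IOPS times I/O size) and ignores protocol overhead; the backup size, IOPS and I/O size are hypothetical inputs, not measurements, and decimal units are used throughout.

```python
from __future__ import annotations


def link_mb_per_s(mbps: float) -> float:
    """Network link speed in megabytes per second (8 bits per byte)."""
    return mbps / 8


def disk_mb_per_s(iops: float, io_size_kb: float) -> float:
    """Rough disk throughput: operations per second times KB per operation."""
    return iops * io_size_kb / 1000


def backup_window(data_gb: float, mbps: float, iops: float, io_size_kb: float) -> tuple[float, str]:
    """Hours needed to move data_gb, and which side limits the transfer."""
    link = link_mb_per_s(mbps)
    disk = disk_mb_per_s(iops, io_size_kb)
    rate = min(link, disk)
    bottleneck = "network" if link < disk else "disk"
    return data_gb * 1000 / rate / 3600, bottleneck


if __name__ == "__main__":
    # Hypothetical 500 GB backup set and a target disk doing 150 IOPS of 64 KB.
    for mbps in (10, 50, 100, 500):
        hours, limit = backup_window(500, mbps, iops=150, io_size_kb=64)
        print(f"{mbps:>4} Mbps -> {hours:6.1f} h, limited by {limit}")
```

With these made-up numbers the disk tops out at 9.6 MB/s, so once the circuit passes roughly 77 Mbps, adding bandwidth no longer shortens the backup window.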


ER's cards: DevSecOps and Cloud Native Enthusiast. I am with Palo Alto Networks by day and the author of this blog by night. Most of the posts highlight what I am focused on and share my work and personal experience. I put them here to recall them later or to help someone else with a similar challenge.